Instead of treating an LLM as a one-time response engine, agentic design introduces structured loops, intermediate checkpoints, and task-oriented decision making. The result is not just longer output, but better execution. In practice, that means more reliable analyses, stronger reports, more robust code generation, and workflows that can react to changing conditions.

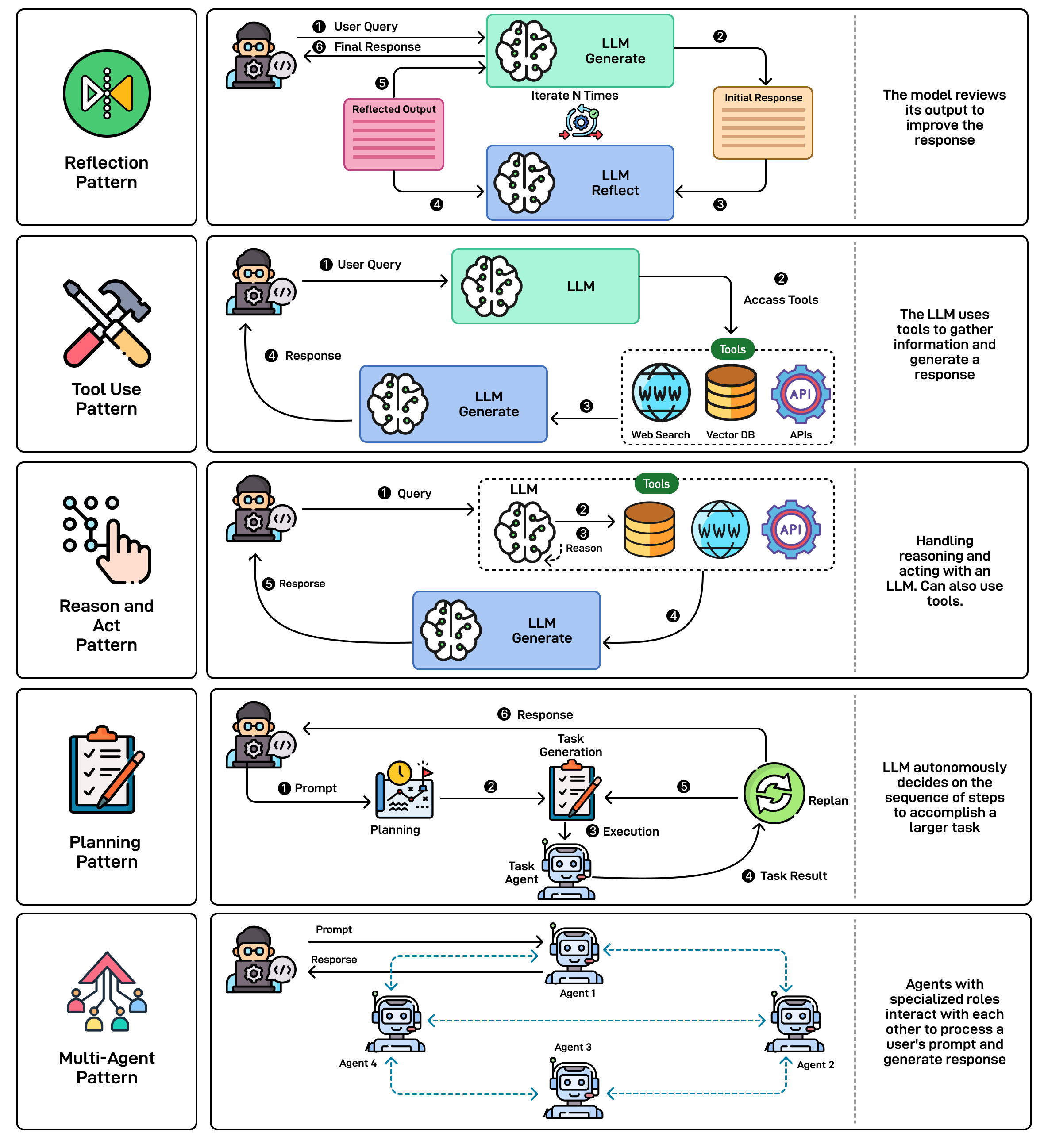

In this article, we will go through five foundational workflow patterns that appear again and again in modern agent systems: reflection, tool use, ReAct, planning, and multi-agent collaboration.

Core design patterns for agentic workflows

Understanding Agentic Workflows

An agentic workflow is designed to do more than answer a prompt. It gives the model a structured way to decide what to do next, how to evaluate progress, and when to change course. In other words, the system is not only generating text; it is managing a process.

Take the example of a research task. A basic assistant may produce a decent summary in one pass, based only on its internal knowledge. An agentic system approaches the same task differently. It may look for recent sources, organize the information into themes, draft the first version, review weak sections, improve them, and only then assemble the final result. Each step informs the next one.

What makes this approach powerful is iteration. The system acts, observes the result, and uses that feedback to decide what should happen next. This is much closer to how humans solve complex problems: we test ideas, notice what works, correct what does not, and gradually improve the output.

The Five Essential Agentic Workflow Patterns

These five patterns do not compete with one another. They are often combined in real systems, depending on the task, the level of uncertainty, and the quality bar required.

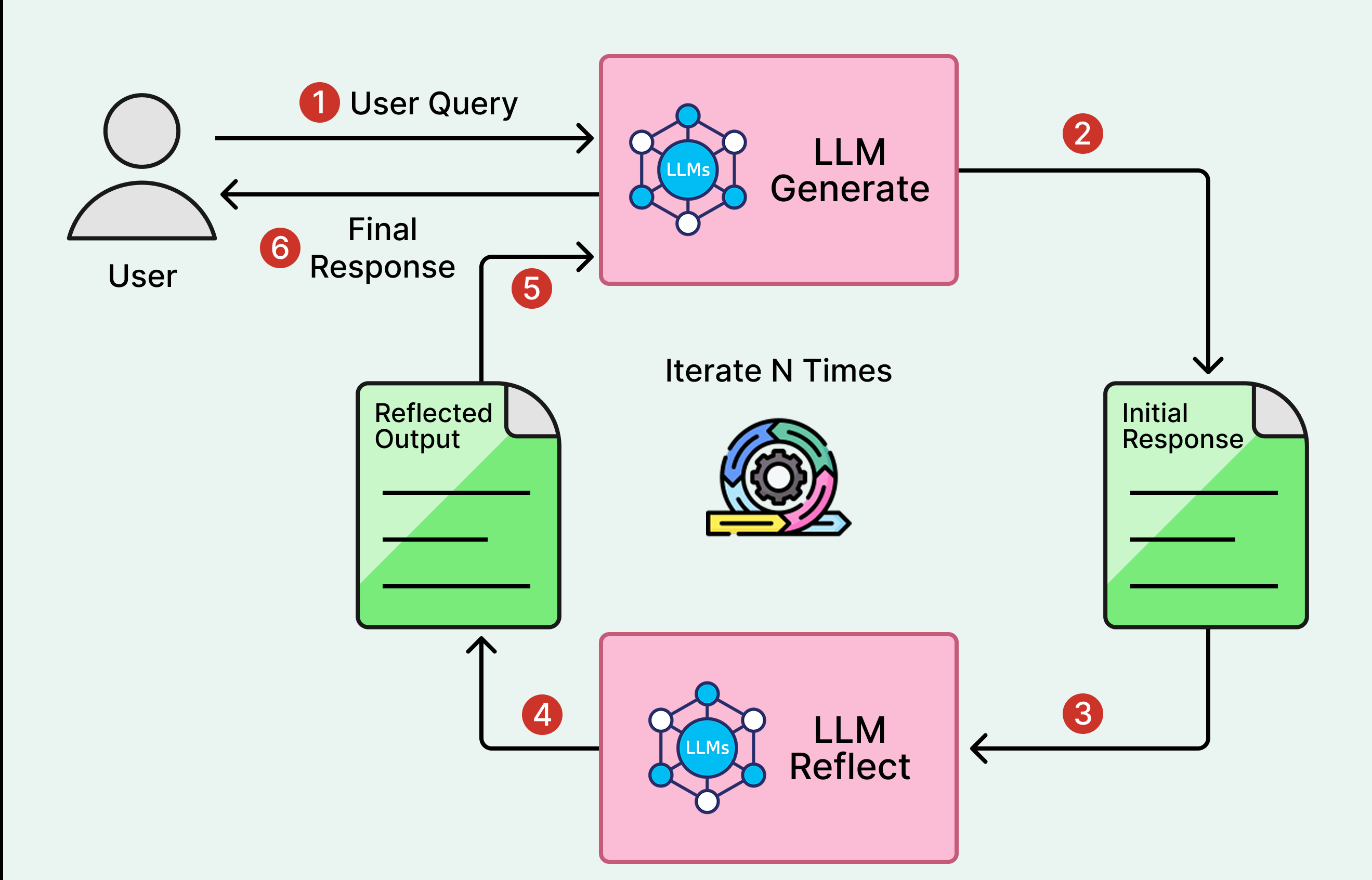

1. Reflection: the self-improving pattern

Reflection introduces a simple but powerful loop: generate, critique, revise. Instead of treating the first answer as final, the agent reviews its own output, identifies weaknesses, and produces an improved version. Even a lightweight reflection step, such as a single critique pass, can noticeably improve quality.

The key benefit is not magic reasoning, but structured revision. The first draft may contain ambiguity, missing detail, weak logic, or poor wording. Reflection creates space to detect these issues before the result is exposed to the user.

This pattern becomes even more useful when the critique is specialized. A reflection step can focus on factual accuracy, clarity for a non-expert audience, tone consistency, coding mistakes, security risks, or performance concerns. The more clearly the review objective is defined, the more effective the improvement cycle becomes.

Reflection works especially well when quality matters more than raw speed. For straightforward factual requests, it may be unnecessary overhead. But for writing, code generation, analysis, or decision support, it is often one of the highest-leverage additions you can make.
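To make the generate, critique, revise loop concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a reference implementation: `reflect`, `stub_generate`, `stub_critique`, and `stub_revise` are hypothetical names, and the three stubs stand in for what would normally be model calls.

```python
from typing import Callable, List

CAPITALIZE = "capitalize the first letter"
END_PERIOD = "end with a period"


def reflect(task: str,
            generate: Callable[[str], str],
            critique: Callable[[str, str], List[str]],
            revise: Callable[[str, str, List[str]], str],
            max_rounds: int = 3) -> str:
    """Generate a draft, then critique and revise until no issues remain."""
    draft = generate(task)
    for _ in range(max_rounds):
        issues = critique(task, draft)
        if not issues:  # the critic is satisfied, so stop early
            break
        draft = revise(task, draft, issues)
    return draft


# Hypothetical stubs: in a real system each would be a model call.
def stub_generate(task: str) -> str:
    return "agents are useful"


def stub_critique(task: str, draft: str) -> List[str]:
    issues = []
    if not draft[0].isupper():
        issues.append(CAPITALIZE)
    if not draft.endswith("."):
        issues.append(END_PERIOD)
    return issues


def stub_revise(task: str, draft: str, issues: List[str]) -> str:
    if CAPITALIZE in issues:
        draft = draft[0].upper() + draft[1:]
    if END_PERIOD in issues:
        draft += "."
    return draft


final = reflect("Write one sentence about agents",
                stub_generate, stub_critique, stub_revise)
print(final)  # Agents are useful.
```

The `max_rounds` cap is a design choice worth keeping in any variant: it bounds the cost when the critic never declares the draft clean. Specializing the review, as described above, amounts to swapping in a different `critique` function.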

Reflection loop: generate, critique, revise

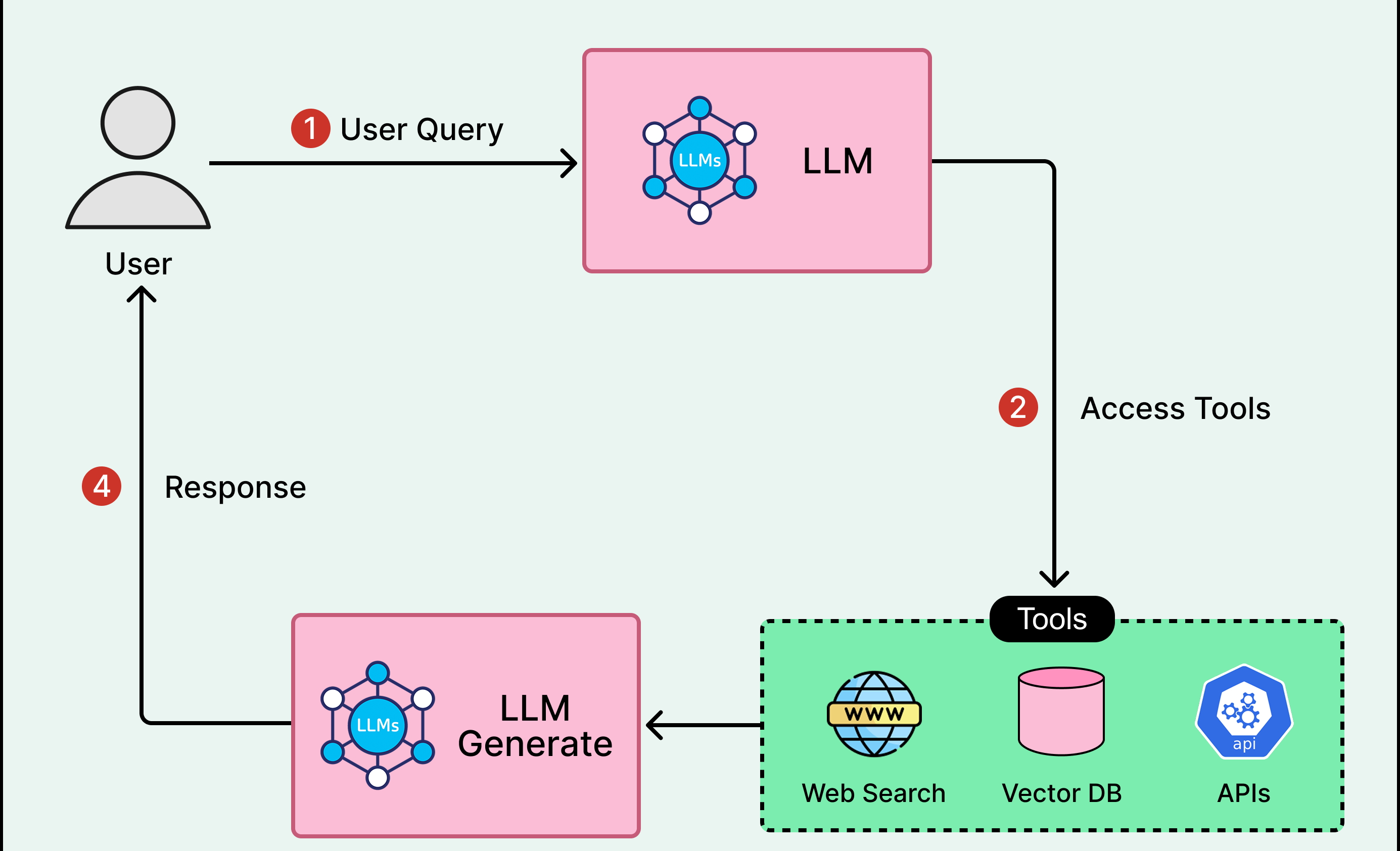

2. Tool Use: extending the agent beyond its training data

A standalone language model is limited by what it already knows and by what it can infer in pure text. It cannot fetch live information, call an external service, inspect a private database, or execute code unless those capabilities are provided through tools.

The tool use pattern equips an agent with external functions such as web search, APIs, calculators, code interpreters, database queries, or file access. The important shift is that the agent chooses when to call these tools instead of following a rigid script.

That flexibility is what makes the pattern useful. If a task requires current data, the agent can search for it. If it needs numerical precision, it can run code. If it must interact with another system, it can call the relevant API. The model is no longer limited to describing actions; it can actually perform them through an execution layer.

Tool use is especially powerful when combined with retry and adaptation. A poor search query can be reformulated. A failing API request can trigger an alternative path. A partial result can lead to new tool calls. This makes tool-enabled agents far more resilient than one-shot automation.
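The idea of an agent choosing a tool and recovering from a failed call can be sketched in a few lines of Python. Everything here is assumed for illustration: the `TOOLS` registry, the two toy tools, and `stub_choose_tool`, which replaces the model's decision with simple string matching.

```python
import math
from typing import Any, Callable, Dict, Optional

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: Dict[str, Callable[..., Any]] = {
    "sqrt": lambda x: math.sqrt(x),
    "word_count": lambda text: len(text.split()),
}


def stub_choose_tool(request: str) -> Optional[Dict[str, Any]]:
    """Stand-in for the model deciding whether a tool is needed, and which."""
    if request.startswith("sqrt "):
        return {"tool": "sqrt", "args": {"x": float(request.split()[1])}}
    if request.startswith("count "):
        return {"tool": "word_count", "args": {"text": request[len("count "):]}}
    return None  # no tool needed


def run_with_tools(request: str,
                   choose: Callable[[str], Optional[Dict[str, Any]]] = stub_choose_tool,
                   tools: Dict[str, Callable[..., Any]] = TOOLS) -> str:
    call = choose(request)
    if call is None:
        return f"(answered without tools) {request}"
    tool = tools.get(call["tool"])
    if tool is None:
        return f"unknown tool: {call['tool']}"
    try:
        return str(tool(**call["args"]))
    except Exception as exc:
        # A real agent could reformulate the call or try another tool here.
        return f"tool {call['tool']} failed: {exc}"


print(run_with_tools("sqrt 16"))                    # 4.0
print(run_with_tools("count the quick brown fox"))  # 4
print(run_with_tools("sqrt -4"))                    # reports the failure instead of crashing
```

The execution layer in this sketch is just the `try` block around the tool call. Returning the error as text, rather than raising, is what would let a model see the failure and adapt its next step.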

Tool use: act through an execution layer

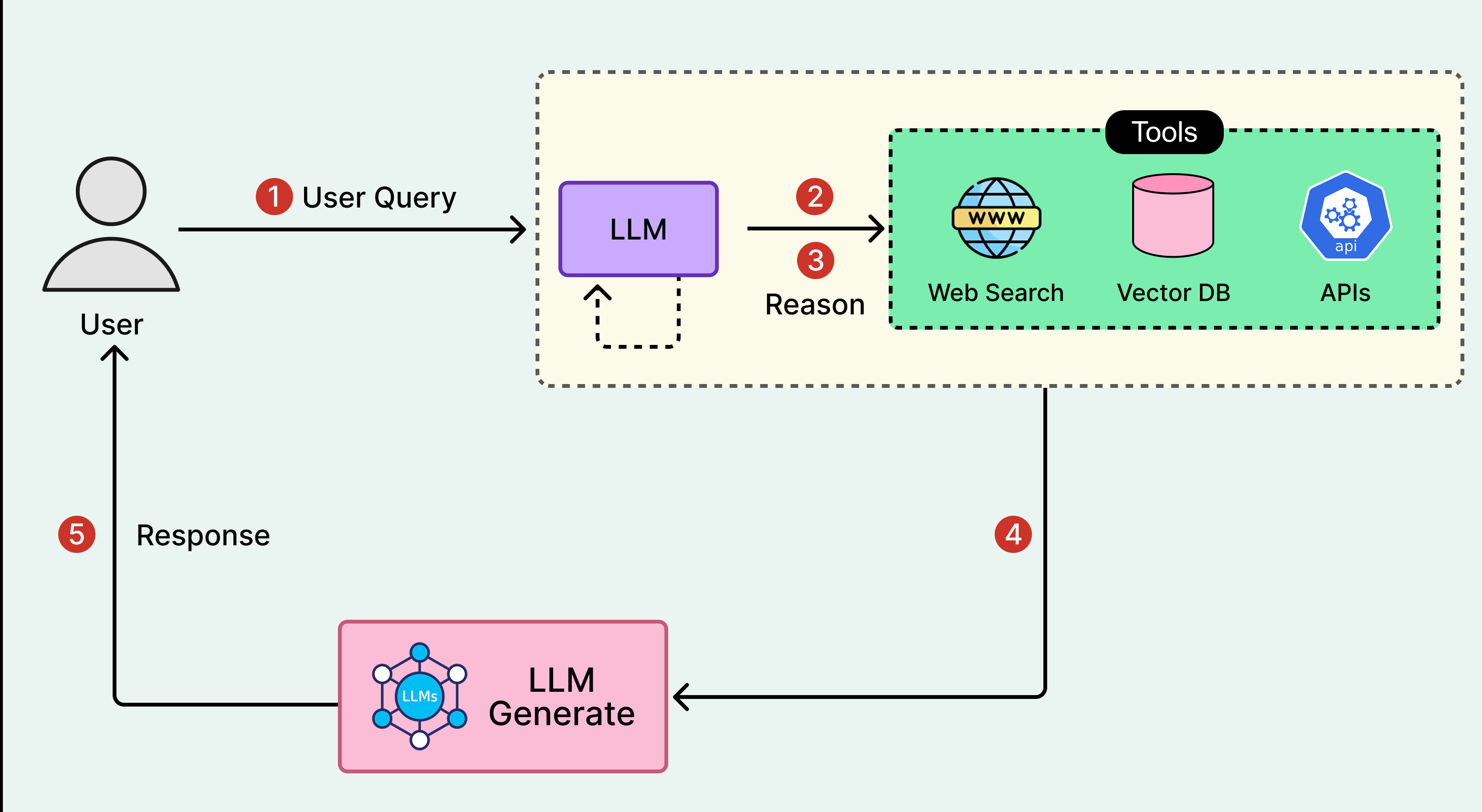

3. ReAct: alternating between reasoning and action

ReAct, short for Reasoning and Acting, combines explicit reasoning with iterative execution. Instead of planning everything up front or acting blindly, the agent alternates between thinking about the next step and taking that step.

A typical ReAct loop looks like this: the agent reviews the current state, identifies what is missing, chooses the most useful next action, executes it, observes the outcome, and reasons again. This repeated sequence creates a flexible and grounded problem-solving process.

One of ReAct's main strengths is that the reasoning trail helps keep the system aligned with the goal. It also makes adaptation easier. When an action fails or produces unexpected results, the agent can explicitly reassess the situation instead of continuing in the wrong direction.

Compared with pure planning, ReAct is more adaptive. Compared with pure execution, it is more deliberate. That balance makes it a strong pattern for open-ended tasks where the agent needs to discover information while it works.
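The loop described in this section (review the state, pick an action, execute, observe, reason again) can be sketched as follows. This is a toy under explicit assumptions: `stub_policy` hard-codes the reasoning a model would produce, and `ORDER_DATA` is made-up data standing in for a real lookup service.

```python
from typing import Callable, Dict, List, Tuple

# Each step records (thought, action, action_input, observation).
Step = Tuple[str, str, str, str]

# Toy data, invented for this example.
ORDER_DATA = {"order 42 status": "shipped", "order 42 carrier": "ACME Post"}


def lookup(query: str) -> str:
    return ORDER_DATA.get(query, "no result")


def stub_policy(goal: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for the model: returns (thought, action, action_input)."""
    known = {step[2]: step[3] for step in history}
    if "order 42 status" not in known:
        return ("I need the order status first.", "lookup", "order 42 status")
    if known["order 42 status"] == "shipped" and "order 42 carrier" not in known:
        return ("It shipped, so I should find the carrier.", "lookup", "order 42 carrier")
    carrier = known.get("order 42 carrier", "an unknown carrier")
    answer = f"Order 42 is {known['order 42 status']} via {carrier}."
    return ("I have enough to answer.", "finish", answer)


def react(goal: str,
          policy: Callable[[str, List[Step]], Tuple[str, str, str]],
          tools: Dict[str, Callable[[str], str]],
          max_steps: int = 5) -> Tuple[str, List[Step]]:
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, action_input = policy(goal, history)  # reason
        if action == "finish":
            return action_input, history
        tool = tools.get(action)
        observation = tool(action_input) if tool else f"unknown action: {action}"  # act
        history.append((thought, action, action_input, observation))  # observe
    return "stopped: step limit reached", history


answer, trace = react("Where is order 42?", stub_policy, {"lookup": lookup})
print(answer)  # Order 42 is shipped via ACME Post.
for thought, action, action_input, observation in trace:
    print(f"{thought} -> {action}({action_input!r}) -> {observation}")
```

Note that the second lookup only happens because of what the first one returned. That dependence of each action on the previous observation is the property that separates this loop from a fixed script, and the `trace` list is the reasoning trail mentioned above.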

ReAct: alternate reasoning and action

4. Planning: structuring execution before acting

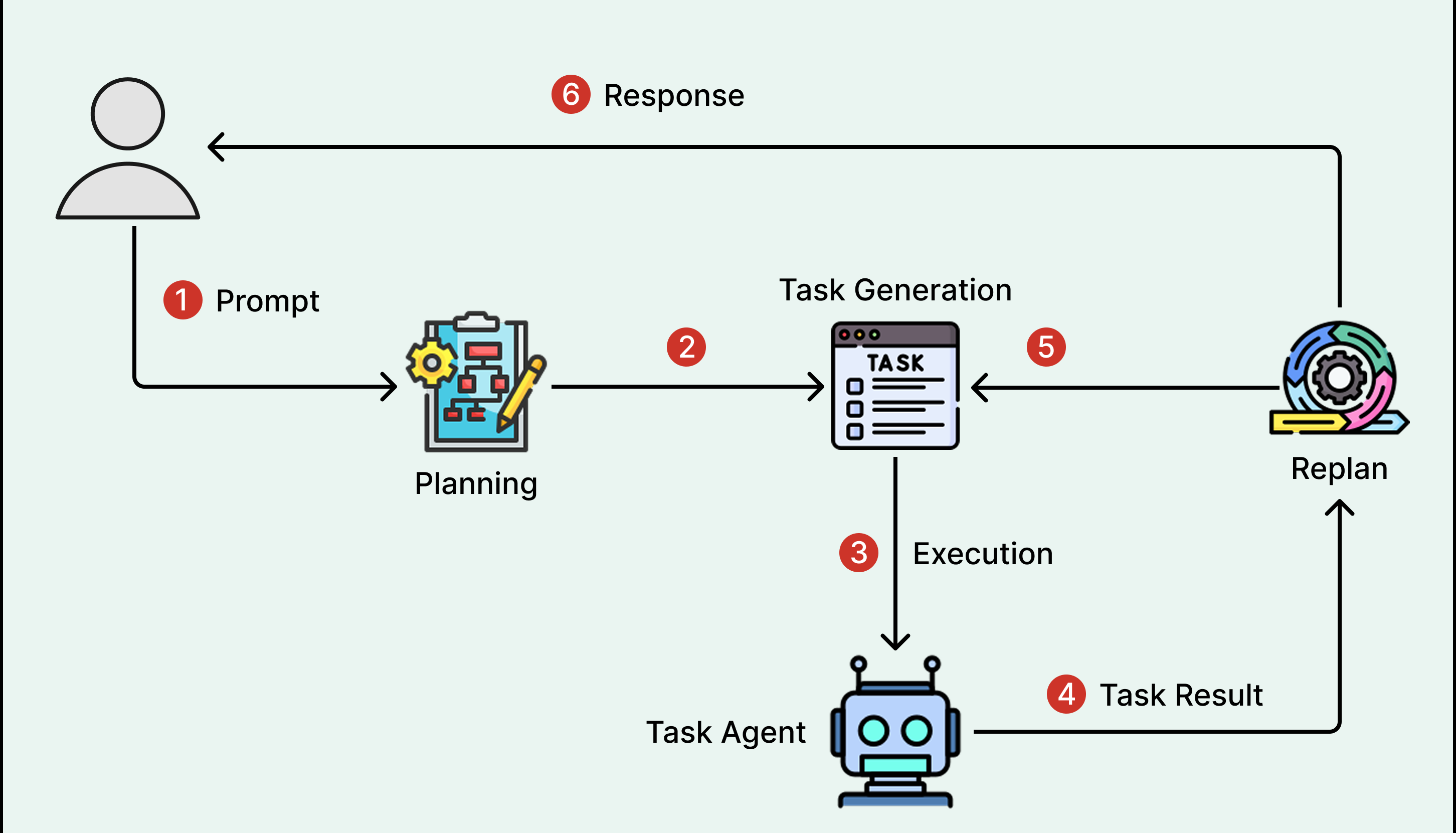

The planning pattern emphasizes decomposition before execution. Rather than jumping directly into action, the agent first translates a broad objective into a sequence of smaller tasks, dependencies, and milestones.

This matters because many real-world tasks are not just difficult; they are also structured. Some steps must happen before others. Some tasks can run in parallel. Some require specific tools, documents, or approvals. Planning makes those constraints explicit before execution begins.

A good planning step reduces waste, avoids redundant work, and creates a clearer path to completion. It is especially valuable when backtracking is costly, when the task involves several phases, or when coordination matters across multiple subtasks.

That said, planning is not always the right default. For short and linear tasks, it can add unnecessary overhead. And when the environment is highly uncertain, an elaborate plan may become obsolete quickly. In those cases, lighter-weight approaches such as ReAct may be more effective.
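One way to picture a plan with dependencies is as a small graph that gets ordered before anything runs. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+). The task names and the `execution_batches` helper are assumptions made for this example, and in a real agent the plan itself would come from a model rather than a hard-coded dictionary.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical plan: each task maps to the set of tasks it depends on.
plan: Dict[str, Set[str]] = {
    "gather sources": set(),
    "outline": {"gather sources"},
    "collect figures": {"gather sources"},
    "draft sections": {"outline"},
    "final report": {"draft sections", "collect figures"},
}


def execution_batches(plan: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into batches; tasks in the same batch could run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan contains a cycle
    batches: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose prerequisites are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


for number, batch in enumerate(execution_batches(plan), start=1):
    print(number, batch)
# 1 ['gather sources']
# 2 ['collect figures', 'outline']
# 3 ['draft sections']
# 4 ['final report']
```

Making the dependencies explicit pays off in two ways here: the second batch shows which tasks can run side by side, and an impossible plan (a cycle) is rejected before any work starts.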

Planning: structured execution with milestones

5. Multi-Agent Systems: collaboration through specialization

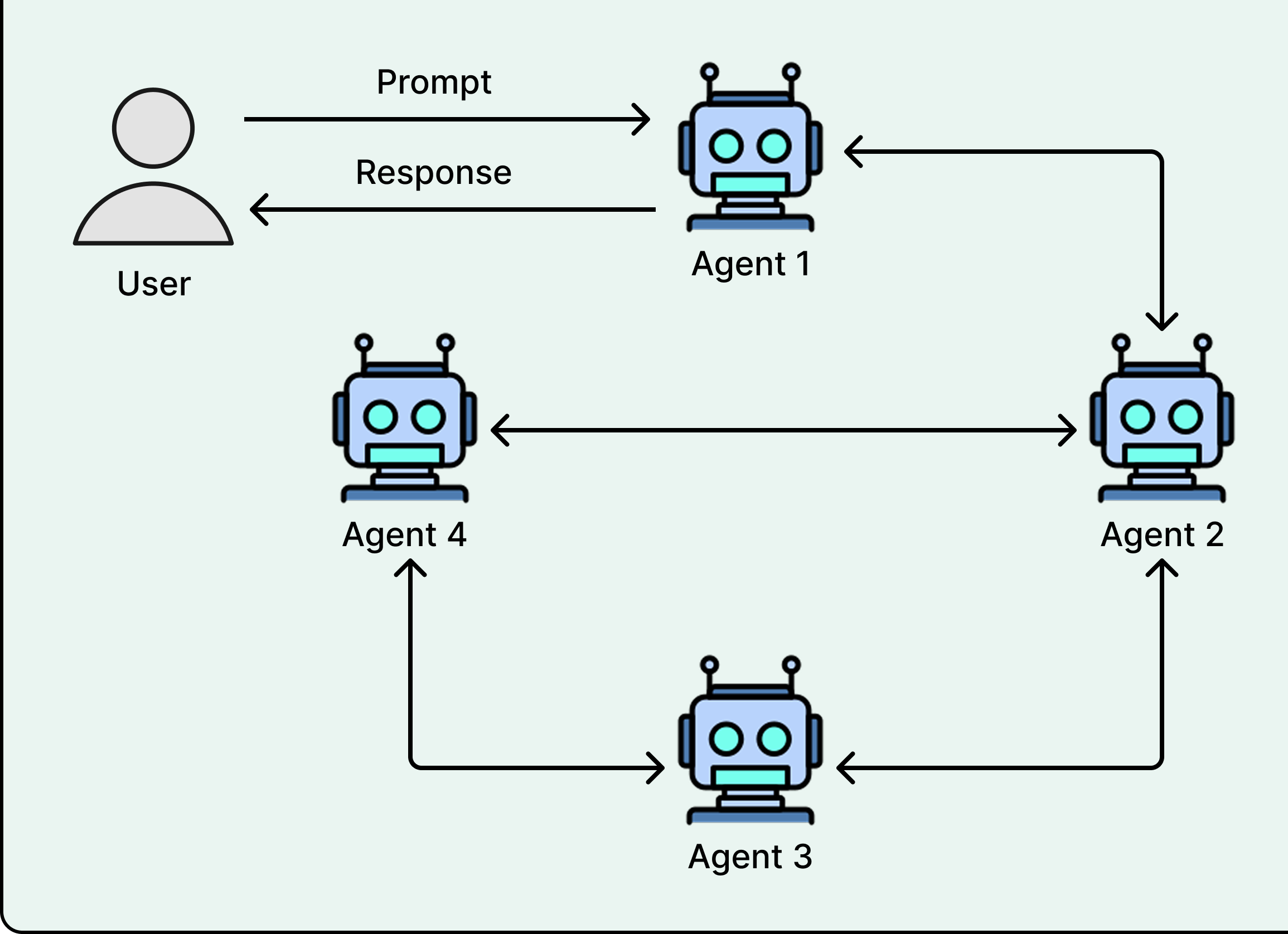

The multi-agent pattern distributes work across several specialized agents instead of asking a single system to handle everything alone. Each agent can focus on a role: research, coding, reviewing, analysis, orchestration, or validation.

This mirrors how strong human teams operate. A specialist often performs better in a narrow domain than a generalist trying to optimize for every requirement at once. In AI systems, the same idea can improve depth, reliability, and coverage.

A common setup includes specialist agents, critic agents, and a coordinator. Specialists produce domain-specific work. Critics challenge or verify the output. The coordinator ensures that tasks are routed correctly and that the final result remains coherent.

Of course, multi-agent design introduces trade-offs. More agents mean more orchestration, more communication rules, and more debugging complexity. For small tasks, the overhead is rarely justified. But for complex workflows requiring multiple perspectives or skill sets, the gains can be substantial.
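The specialist, critic, and coordinator roles can be sketched with plain functions. This is a deliberately small illustration, not a framework: `research_agent`, `coding_agent`, `critic_agent`, and `coordinate` are hypothetical names, and each "agent" is a stub where a real system would call a separately prompted model.

```python
from typing import Callable, Dict, List, Tuple

Specialist = Callable[[str, List[str]], str]   # (task, feedback) -> output
Critic = Callable[[str, str], List[str]]       # (role, output) -> issues


def research_agent(task: str, feedback: List[str]) -> str:
    text = f"Findings on {task}"
    if "cite sources" in feedback:
        text += " [sources attached]"
    return text


def coding_agent(task: str, feedback: List[str]) -> str:
    return f"def solution(): ...  # {task}"


def critic_agent(role: str, output: str) -> List[str]:
    """Return a list of issues; an empty list means the output is approved."""
    if role == "research" and "sources" not in output:
        return ["cite sources"]
    if role == "coding" and not output.startswith("def "):
        return ["return a function definition"]
    return []


def coordinate(subtasks: List[Tuple[str, str]],
               specialists: Dict[str, Specialist],
               critic: Critic,
               max_revisions: int = 1) -> Dict[str, str]:
    """Route each subtask to its specialist and let the critic request revisions."""
    results: Dict[str, str] = {}
    for role, task in subtasks:
        agent = specialists[role]  # a KeyError here means no specialist for the role
        output = agent(task, [])
        for _ in range(max_revisions):
            issues = critic(role, output)
            if not issues:
                break
            output = agent(task, issues)  # revise using the critic's feedback
        # Once the revision budget is spent, the latest output is kept as-is.
        results[role] = output
    return results


results = coordinate(
    [("research", "agent patterns"), ("coding", "parse a log file")],
    {"research": research_agent, "coding": coding_agent},
    critic_agent,
)
print(results["research"])  # Findings on agent patterns [sources attached]
print(results["coding"])
```

Even at this size, the orchestration overhead mentioned above is visible: the coordinator needs a routing table, a feedback format the specialists understand, and a revision budget. Those are the parts that grow as more agents are added.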

Multi-agent: specialization and coordination

Conclusion

Agentic workflow patterns represent a major shift in how we design AI systems. The value no longer comes only from prompt quality, but from how the system organizes reasoning, execution, validation, and collaboration over time.

Reflection improves quality through revision. Tool use expands what the system can actually do. ReAct connects reasoning with adaptive execution. Planning adds structure to complex objectives. Multi-agent design introduces specialization and complementary viewpoints.

The strongest AI systems often combine several of these patterns instead of relying on only one. Understanding when to use each one is therefore a practical design skill for anyone building modern AI products, assistants, or internal automation systems.

Written by

Youssef LAIDOUNI

Full Stack Engineer | Java • Angular • PHP | APIs, MVP, Performance & Automation